转载自 Thariq 的文章,原文链接在 X,转载请注明来源!

页面底部有本篇文章的原文!

从构建 Claude Code 中学到的经验:像 Agent 一样看世界

(简体中文版本,翻译来自Opus4.6,图片未进行翻译)

构建 Agent 框架最困难的部分之一,就是构造它的动作空间。

Claude 通过工具调用(Tool Calling)来执行操作,但在 Claude API 中,工具可以通过 bash、技能(skills)以及最近推出的代码执行等基础能力以多种方式构建(关于 Claude API 中程序化工具调用的更多内容,请参阅 @RLanceMartin 的新文章)。

面对这么多选择,你该如何设计 Agent 的工具?你只需要一个工具,比如代码执行或 bash 就够了吗?如果你有 50 个工具,每个对应 Agent 可能遇到的一种用例,又会怎样?

为了让自己站在模型的角度思考,我喜欢想象自己面对一道数学难题。你会想要什么工具来解决它?这取决于你自身的能力!

纸笔是最低要求,但你会受限于手动计算。计算器会更好,但你需要知道如何使用那些高级功能。最快、最强大的选择是一台电脑,但你得知道如何用它来编写和执行代码。

这是一个设计 Agent 的实用框架。你要给它与其自身能力相匹配的工具。但你怎么知道它有什么能力呢?你去观察、阅读它的输出、反复实验。你学会像 Agent 一样看世界。

以下是我们在构建 Claude Code 的过程中,通过观察 Claude 所学到的一些经验。

改进信息引出能力与 AskUserQuestion 工具

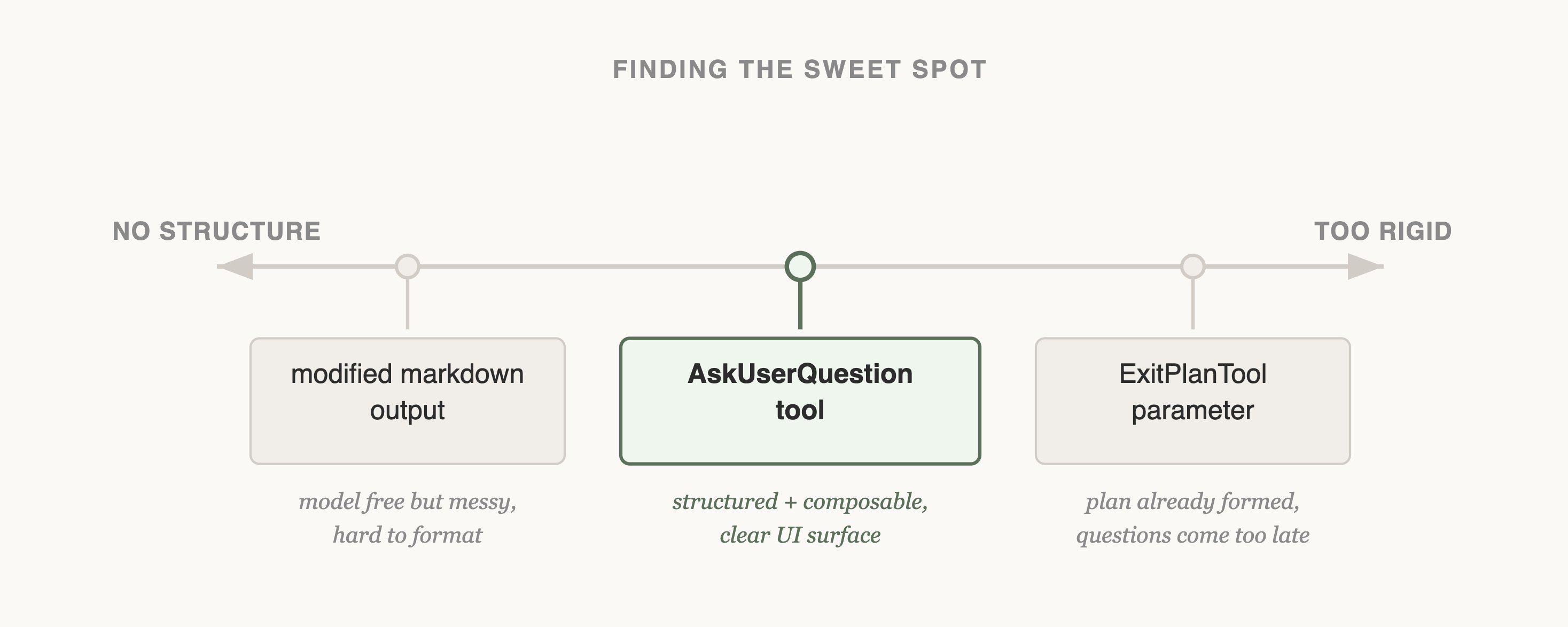

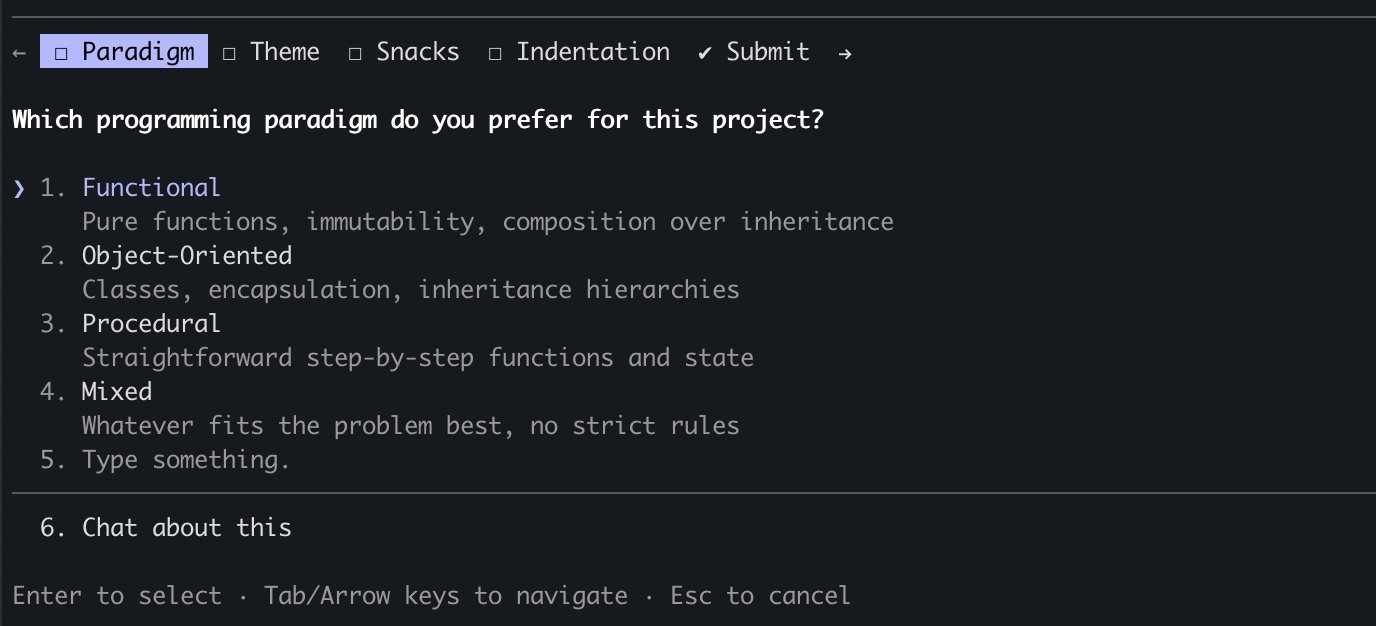

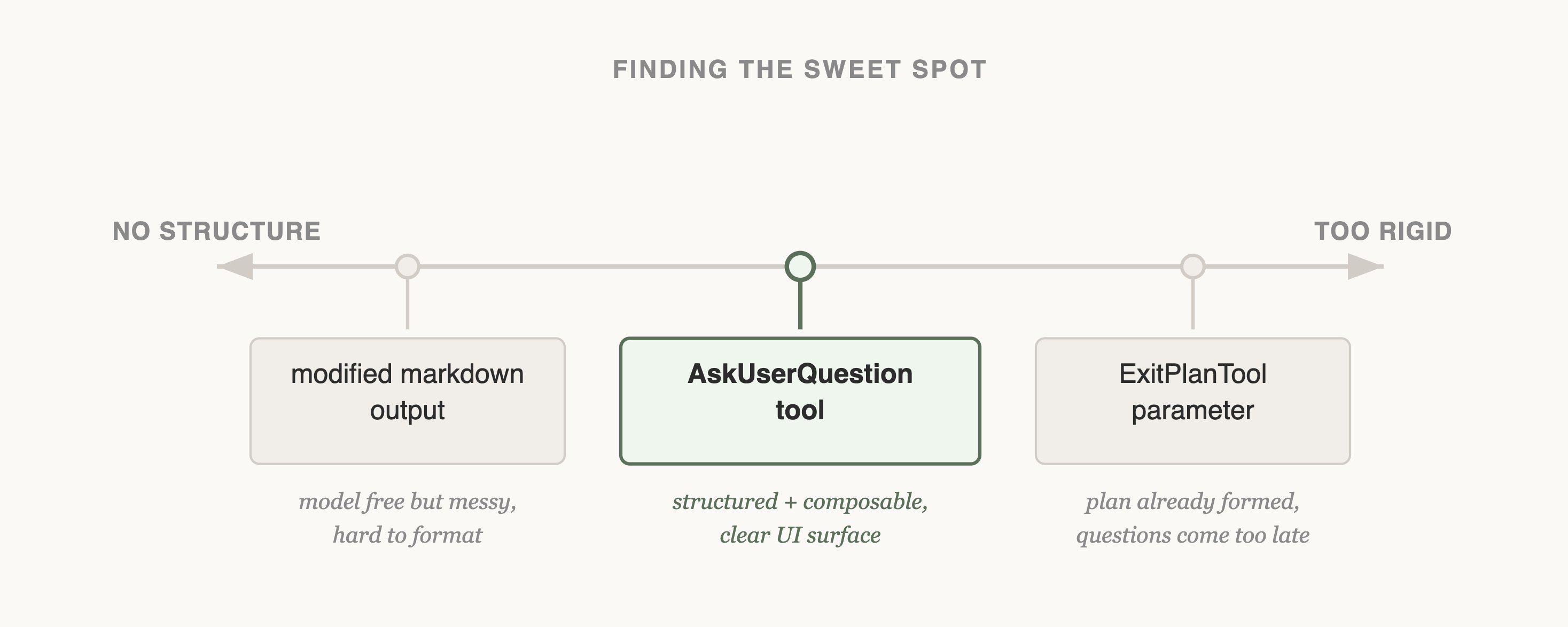

在构建 AskUserQuestion 工具时,我们的目标是提升 Claude 提问的能力(通常称为“引出”,elicitation)。

虽然 Claude 可以直接用纯文本提问,但我们发现回答这些问题感觉耗费了不必要的时间。我们该如何降低这种摩擦,增加用户与 Claude 之间的沟通带宽?

尝试 #1 - 修改 ExitPlanTool

我们首先尝试的是在 ExitPlanTool 中添加一个参数,让它在计划旁边附带一组问题。这是最容易实现的方案,但它让 Claude 感到困惑,因为我们同时要求它给出一个计划和一组关于该计划的问题。如果用户的回答与计划内容矛盾怎么办?Claude 是否需要调用两次 ExitPlanTool?我们需要另一种方案。

(你可以在我们关于 prompt 缓存的文章中了解更多关于我们为什么创建 ExitPlanTool 的内容)

尝试 #2 - 更改输出格式

接下来,我们尝试修改 Claude 的输出指令,让它输出一种经过轻微修改的 markdown 格式来提问。例如,我们可以要求它输出一个带有方括号选项的要点式问题列表,然后我们解析并格式化这些问题作为用户界面展示给用户。

虽然这是我们能做的最通用的改动,Claude 在输出这种格式方面看起来也还行,但这并不能保证一致性。Claude 会附加多余的句子、遗漏选项,或使用完全不同的格式。

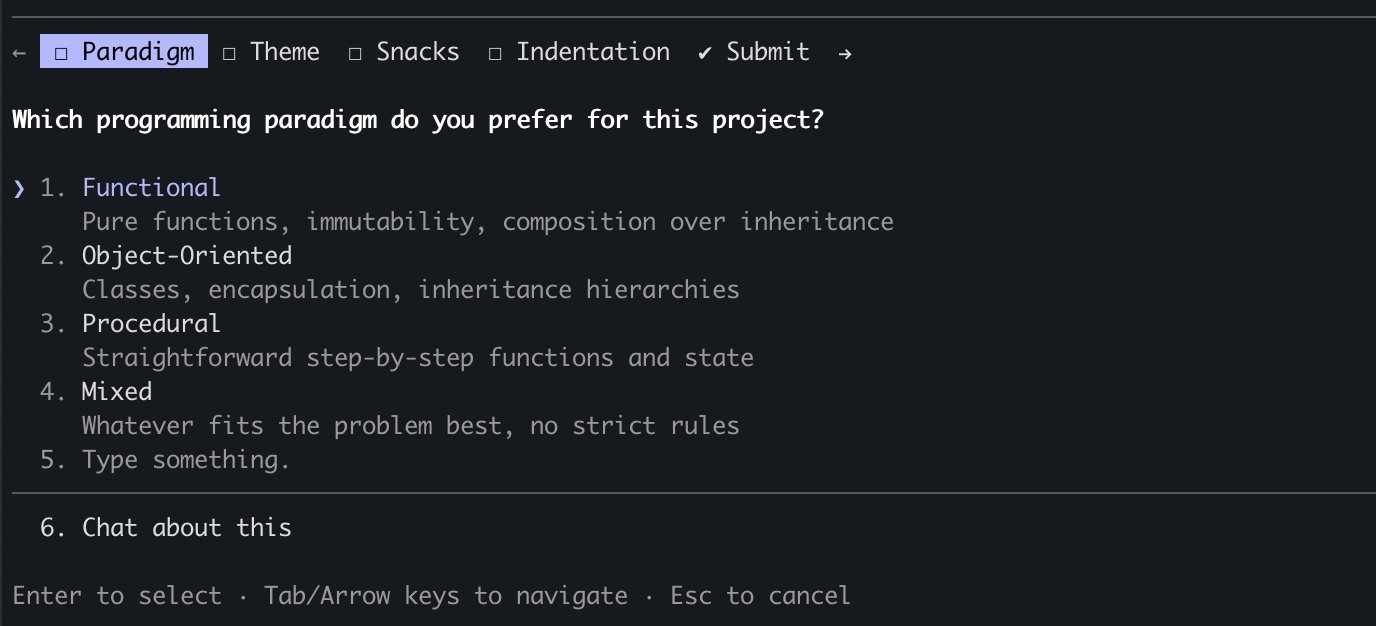

尝试 #3 - AskUserQuestion 工具

最终,我们决定创建一个 Claude 可以在任何时候调用的工具,但我们特别在计划模式下提示它使用该工具。当工具被触发时,我们会显示一个模态框来展示问题,并阻塞 Agent 的循环直到用户回答。

这个工具让我们能够提示 Claude 产出结构化的输出,并帮助我们确保 Claude 给用户提供了多个选项。它还给了用户组合使用这个功能的方式,例如在 Agent SDK 中调用它,或在技能(skills)中引用它。

最重要的是,Claude 似乎很乐意调用这个工具,而且我们发现它的输出效果很好。即使是设计得最好的工具,如果 Claude 不理解如何调用它,也是不起作用的。

这是 Claude Code 中信息引出的最终形态吗?我们不确定。正如你将在下一个例子中看到的,对一个模型有效的方案,对另一个模型未必是最佳的。

随能力升级而更新 - 任务与待办事项

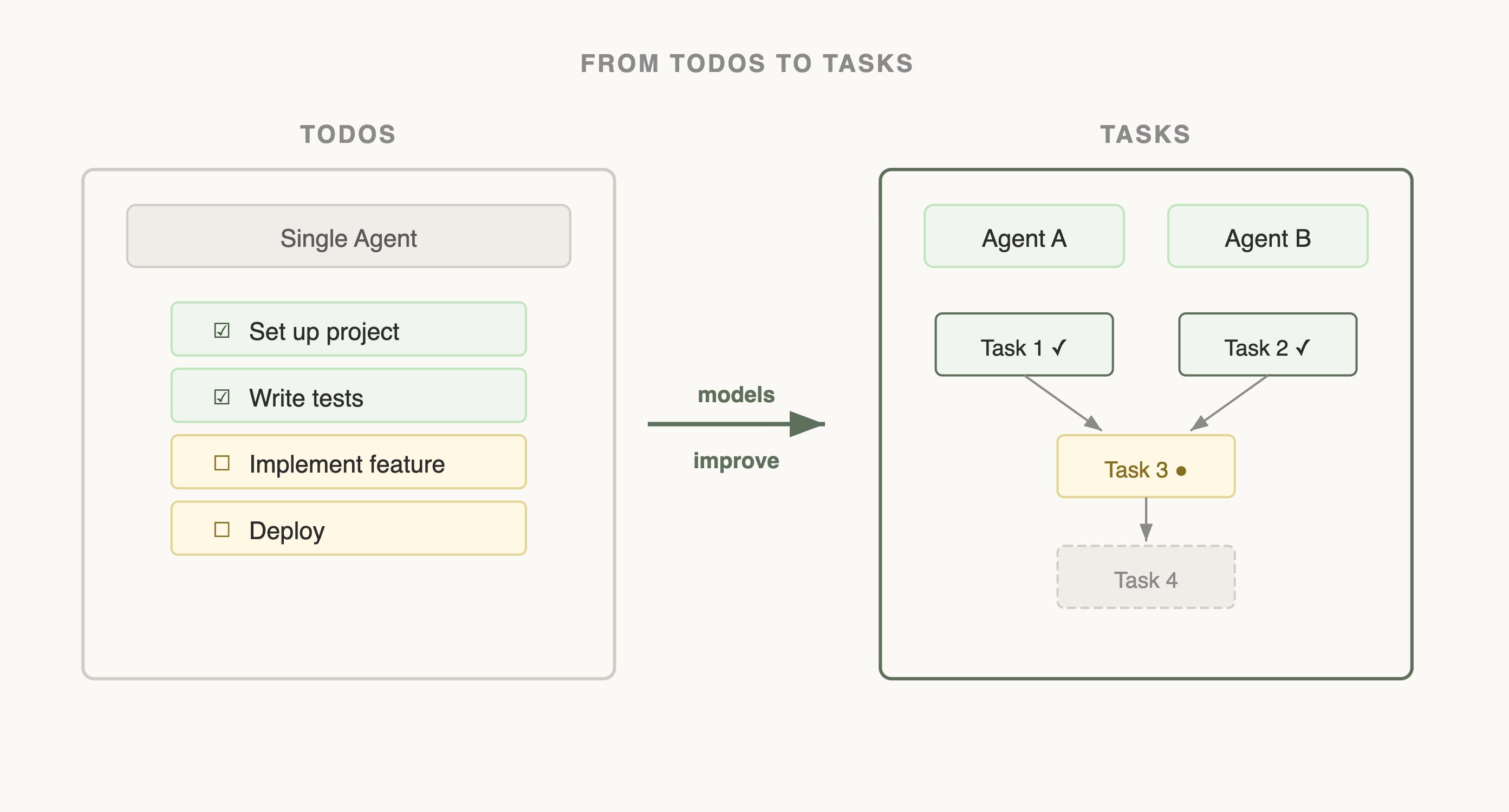

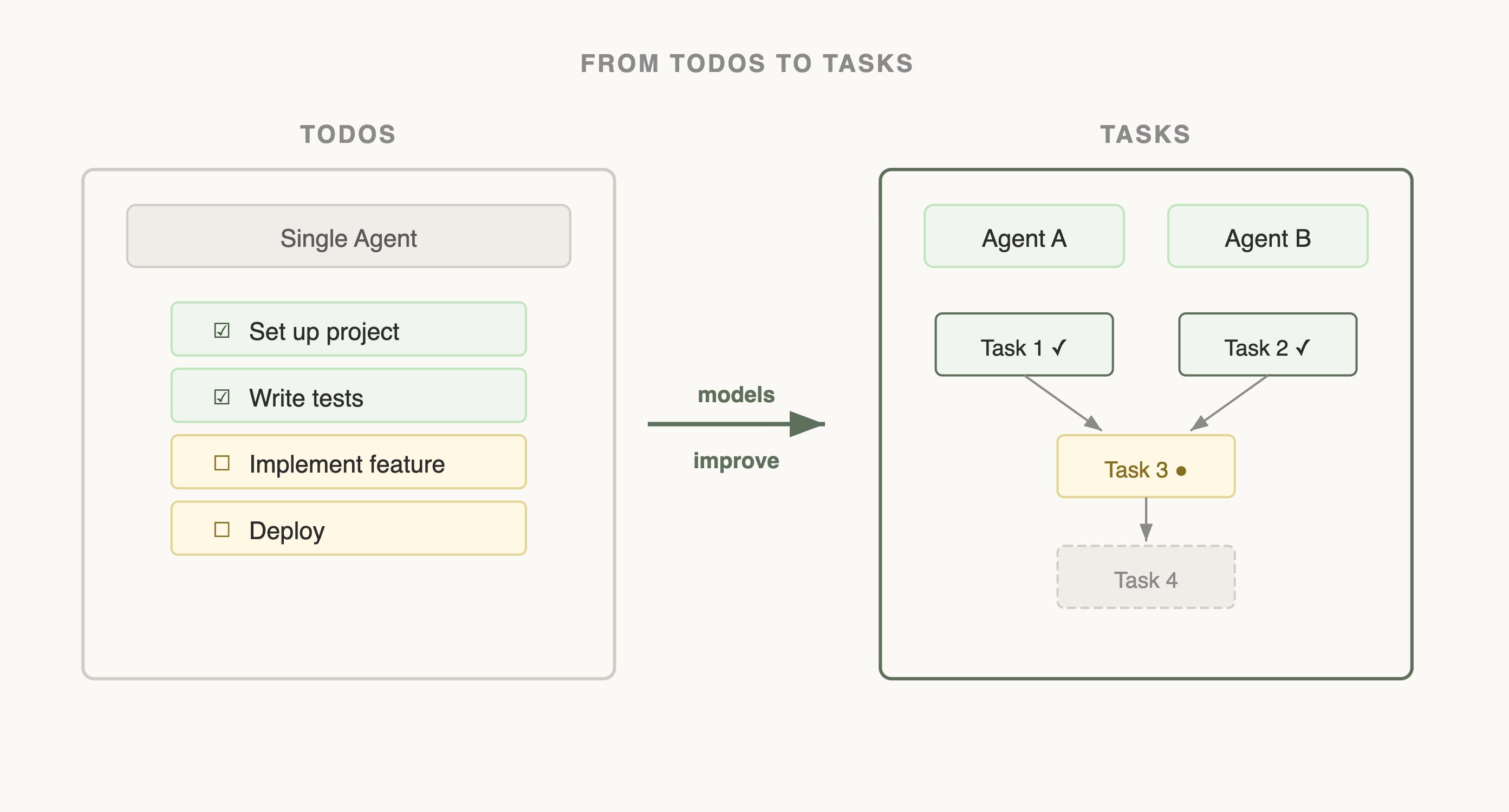

当我们首次发布 Claude Code 时,我们意识到模型需要一个待办事项列表来保持它的专注。待办事项可以在开始时写好,然后随着模型完成工作逐项勾选。为此,我们给 Claude 提供了 TodoWrite 工具,它可以编写或更新待办事项并展示给用户。

但即便如此,我们仍然经常看到 Claude 忘记它需要做什么。为了应对这个问题,我们每隔 5 轮插入系统提醒,提醒 Claude 它的目标。

但随着模型的改进,它们不仅不再需要被提醒待办事项列表,反而可能觉得它是一种限制。被发送待办事项列表的提醒会让 Claude 认为它必须严格遵循列表,而不是去修改它。我们还看到 Opus 4.5 在使用子代理(subagents)方面有了很大进步,但子代理之间如何协调共享的待办事项列表呢?

看到这些,我们用任务工具(Task Tool)替换了 TodoWrite(在这里了解更多关于任务的内容)。待办事项的目标是让模型保持在正轨上,而任务则更多是帮助 Agent 之间相互沟通。任务可以包含依赖关系、在子代理之间共享更新,而且模型可以修改和删除它们。

随着模型能力的提升,你的模型曾经需要的工具现在可能反而在约束它们。不断重新审视之前关于需要哪些工具的假设是很重要的。这也是为什么坚持支持一小组能力特征相近的模型是有用的。

设计搜索接口

对 Claude 来说,一组特别重要的工具是搜索工具,它们可以用来构建自身的上下文。

Claude Code 刚推出时,我们使用 RAG 向量数据库来为 Claude 查找上下文。虽然 RAG 强大且快速,但它需要索引和设置,并且在各种不同的环境中可能很脆弱。更重要的是,Claude 是被给予了这些上下文,而不是自己找到的。

但如果 Claude 可以在网上搜索,为什么不能搜索你的代码库呢?通过给 Claude 一个 Grep 工具,我们可以让它自己搜索文件并构建上下文。

这是我们观察到的一个规律:随着 Claude 变得越来越聪明,只要给它合适的工具,它在构建自身上下文方面就会越来越出色。

当我们引入 Agent 技能(Agent Skills)时,我们将渐进式披露(progressive disclosure)的理念正式化了,它允许 Agent 通过探索逐步发现相关的上下文。

Claude 可以读取技能文件,而这些文件又可以引用其他文件,模型可以递归地阅读。事实上,技能的一个常见用途就是为 Claude 添加更多的搜索能力,比如教它如何使用某个 API 或查询数据库。

在一年的时间里,Claude 从几乎无法自主构建上下文,发展到能够跨多层文件进行嵌套搜索,精确找到所需的上下文。

渐进式披露现在是我们在不添加工具的情况下增加新功能的常用技术。

渐进式披露 - Claude Code 指南代理

Claude Code 目前有大约 20 个工具,我们一直在问自己是否需要所有这些工具。添加新工具的门槛很高,因为这给了模型多一个需要考虑的选项。

例如,我们注意到 Claude 对如何使用 Claude Code 本身了解不够。如果你问它如何添加一个 MCP 或者某个斜杠命令的功能,它无法回答。

我们本可以把所有这些信息放在系统提示词中,但考虑到用户很少问这类问题,这会增加上下文腐化(context rot),并干扰 Claude Code 的本职工作:写代码。

相反,我们尝试了一种渐进式披露的方式。我们给了 Claude 一个指向其文档的链接,它可以加载文档来搜索更多信息。这种方法有效,但我们发现 Claude 会将大量结果加载到上下文中来寻找正确答案,而实际上你只需要那个答案本身。

所以我们构建了 Claude Code 指南子代理,当你问 Claude 关于它自身的问题时,会被提示调用该子代理。这个子代理有详细的指令,指导它如何高效地搜索文档以及返回什么内容。

虽然这并不完美——当你问 Claude 如何配置它自己时,它仍然可能会搞混——但它比以前好多了!我们能够在不添加工具的情况下,扩展 Claude 的动作空间。

是艺术,不是科学

如果你期望的是一套关于如何构建工具的刚性规则,很遗憾这篇文章并非如此。为你的模型设计工具既是一门艺术,也是一门科学。它在很大程度上取决于你使用的模型、Agent 的目标以及它所运行的环境。

多做实验,仔细阅读输出,尝试新事物。像 Agent 一样看世界。

原文: Lessons from Building Claude Code: Seeing like an Agent

One of the hardest parts of building an agent harness is constructing its action space.

Claude acts through Tool Calling, but there are a number of ways tools can be constructed in the Claude API with primitives like bash, skills and recently code execution (read more about programmatic tool calling on the Claude API in @RLanceMartin’s new article).

Given all these options, how do you design the tools of your agent? Do you need just one tool like code execution or bash? What if you had 50 tools, one for each use case your agent might run into?

To put myself in the mind of the model I like to imagine being given a difficult math problem. What tools would you want in order to solve it? It would depend on your own skills!

Paper would be the minimum, but you’d be limited by manual calculations. A calculator would be better, but you would need to know how to operate the more advanced options. The fastest and most powerful option would be a computer, but you would have to know how to use it to write and execute code.

This is a useful framework for designing your agent. You want to give it tools that are shaped to its own abilities. But how do you know what those abilities are? You pay attention, read its outputs, experiment. You learn to see like an agent.

Here are some lessons we’ve learned from paying attention to Claude while building Claude Code.

Improving Elicitation & the AskUserQuestion tool

When building the AskUserQuestion tool, our goal was to improve Claude’s ability to ask questions (often called elicitation).

While Claude could just ask questions in plain text, we found that answering those questions felt like it took an unnecessary amount of time. How could we lower this friction and increase the bandwidth of communication between the user and Claude?

Attempt #1 - Editing the ExitPlanTool

The first thing we tried was adding a parameter to the ExitPlanTool to have an array of questions alongside the plan. This was the easiest thing to implement, but it confused Claude because we were simultaneously asking for a plan and a set of questions about the plan. What if the user’s answers conflicted with what the plan said? Would Claude need to call the ExitPlanTool twice? We needed another approach.

(You can read more about why we made an ExitPlanTool in our post on prompt caching.)

Attempt #2 - Changing Output Format

Next we tried modifying Claude’s output instructions to serve a slightly modified markdown format that it could use to ask questions. For example, we could ask it to output a list of bullet point questions with alternatives in brackets. We could then parse and format that question as UI for the user.

While this was the most general change we could make and Claude even seemed to be okay at outputting this, the format was not guaranteed. Claude would append extra sentences, omit options, or use a different format altogether.
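To make the fragility concrete, here is a minimal sketch of such a parser. The exact format (a bullet, a question, then `|`-separated options in square brackets) and the names `QUESTION_RE`, `Question` and `parse_questions` are assumptions made for illustration, not the format Claude Code actually tried.

```python
import re
from dataclasses import dataclass

# Hypothetical format: "- Which database? [Postgres | SQLite]"
QUESTION_RE = re.compile(r"^[-*]\s+(?P<question>.+?)\s*\[(?P<options>[^\]]+)\]\s*$")


@dataclass
class Question:
    text: str
    options: list


def parse_questions(output):
    """Return (parsed questions, non-blank lines that did not match the format)."""
    questions, unparsed = [], []
    for line in output.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        match = QUESTION_RE.match(stripped)
        if match is None:
            # Extra sentences, missing options, or a different format end up here.
            unparsed.append(stripped)
            continue
        options = [opt.strip() for opt in match["options"].split("|") if opt.strip()]
        questions.append(Question(match["question"], options))
    return questions, unparsed


well_formed = "- Which database? [Postgres | SQLite]\n- Add tests? [Yes | No]"
drifted = "Sure! Here are my questions:\n- Which database?\n1. Add tests? (yes/no)"

parsed_ok, leftover_ok = parse_questions(well_formed)
parsed_bad, leftover_bad = parse_questions(drifted)
```

With the well-formed text every line parses. With the drifted text nothing does, so the UI would have to fall back to plain text or guess at the intent.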

Attempt #3 - The AskUserQuestion Tool

Finally, we landed on creating a tool that Claude could call at any point, but it was particularly prompted to do so during plan mode. When the tool triggered we would show a modal to display the questions and block the agent’s loop until the user answered.

This tool allowed us to prompt Claude for a structured output and it helped us ensure that Claude gave the user multiple options. It also gave users ways to compose this functionality, for example calling it in the Agent SDK or referring to it in skills.
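As a rough sketch of what a tool like this could look like, the definition below uses the `name` / `description` / `input_schema` shape of Claude API tool definitions. The schema fields (`questions`, `question`, `options`), the handler and the `prompt_user` callback are hypothetical stand-ins; the real AskUserQuestion schema and modal are not described in this article.

```python
# Hypothetical tool definition; only the name/description/input_schema
# layout follows the Claude API, the fields themselves are assumed.
ASK_USER_QUESTION_TOOL = {
    "name": "AskUserQuestion",
    "description": "Ask the user one or more multiple-choice questions and wait for answers.",
    "input_schema": {
        "type": "object",
        "properties": {
            "questions": {
                "type": "array",
                "items": {
                    "type": "object",
                    "properties": {
                        "question": {"type": "string"},
                        "options": {
                            "type": "array",
                            "items": {"type": "string"},
                            "minItems": 2,
                        },
                    },
                    "required": ["question", "options"],
                },
            }
        },
        "required": ["questions"],
    },
}


def handle_ask_user_question(tool_input, prompt_user):
    """Block the agent loop until every question has a valid answer.

    `prompt_user(question, options)` stands in for the modal: it must not
    return until the user has picked one of the options.
    """
    answers = {}
    for item in tool_input["questions"]:
        options = item["options"]
        if len(options) < 2:
            raise ValueError("each question needs at least two options")
        choice = prompt_user(item["question"], options)  # blocks here
        if choice not in options:
            raise ValueError(f"unexpected answer: {choice!r}")
        answers[item["question"]] = choice
    return answers  # sent back to the model as the tool result
```

Because the questions arrive as validated tool input rather than free text, the UI never has to guess where a question ends or whether options were supplied.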

Most importantly, Claude seemed to like calling this tool and we found its outputs worked well. Even the best designed tool doesn’t work if Claude doesn’t understand how to call it.

Is this the final form of elicitation in Claude Code? We’re not sure. As you’ll see in the next example, what works for one model may not be the best for another.

Updating with Capabilities - Tasks & Todos

When we first launched Claude Code, we realized that the model needed a Todo list to keep it on track. Todos could be written at the start and checked off as the model did work. To do this we gave Claude the TodoWrite tool, which would write or update Todos and display them to the user.

But even then we often saw Claude forgetting what it had to do. To adapt, we inserted system reminders every 5 turns that reminded Claude of its goal.
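The reminder mechanism can be sketched roughly as follows. The `Todo` shape, the `<system-reminder>` wrapper text and the choice to append the reminder as a user message are assumptions for illustration; only the 5-turn interval comes from the description above.

```python
from dataclasses import dataclass


@dataclass
class Todo:
    content: str
    done: bool = False


def build_reminder(todos):
    """Render the todo list as reminder text (the wording is hypothetical)."""
    lines = [f"[{'x' if t.done else ' '}] {t.content}" for t in todos]
    return "<system-reminder>\nCurrent todo list:\n" + "\n".join(lines) + "\n</system-reminder>"


def with_reminder(messages, turn, todos, interval=5):
    """Return a copy of `messages`, adding a reminder on every `interval`-th turn."""
    if turn > 0 and turn % interval == 0:
        return messages + [{"role": "user", "content": build_reminder(todos)}]
    return list(messages)
```

A fixed interval is the simplest possible policy, and it applies the same pressure whether or not the model has drifted.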

But as models improved, they not only stopped needing reminders of the Todo List but could find it limiting. Being sent reminders of the todo list made Claude think that it had to stick to the list instead of modifying it. We also saw Opus 4.5 get much better at using subagents, but how could subagents coordinate on a shared Todo List?

Seeing this, we replaced TodoWrite with the Task Tool (read more on Tasks here). Whereas Todos were about keeping the model on track, Tasks were more about helping agents communicate with each other. Tasks could include dependencies and share updates across subagents, and the model could alter and delete them.

As model capabilities increase, the tools that your models once needed might now be constraining them. It’s important to constantly revisit previous assumptions on what tools are needed. This is also why it’s useful to support only a small set of models with fairly similar capability profiles.
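The properties attributed to Tasks above (dependencies, updates visible to every subagent, and the freedom to alter or delete) can be illustrated with a small shared board. `Task`, `TaskBoard` and their fields are hypothetical names for this sketch, not the Task Tool's real data model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    id: str
    description: str
    depends_on: set = field(default_factory=set)  # ids of prerequisite tasks
    status: str = "pending"  # "pending" | "in_progress" | "completed"
    owner: Optional[str] = None  # which agent picked the task up


class TaskBoard:
    """One board shared by the main agent and its subagents."""

    def __init__(self):
        self.tasks = {}

    def add(self, task):
        self.tasks[task.id] = task

    def update(self, task_id, **changes):
        task = self.tasks[task_id]
        for name, value in changes.items():
            if not hasattr(task, name):
                raise AttributeError(f"unknown task field: {name}")
            setattr(task, name, value)

    def delete(self, task_id):
        del self.tasks[task_id]
        # Tasks that were waiting on the deleted one are no longer blocked by it.
        for task in self.tasks.values():
            task.depends_on.discard(task_id)

    def ready(self):
        """Ids of pending tasks whose dependencies are all completed."""
        done = {t.id for t in self.tasks.values() if t.status == "completed"}
        return [
            t.id
            for t in self.tasks.values()
            if t.status == "pending" and t.depends_on <= done
        ]


board = TaskBoard()
board.add(Task("schema", "Design the database schema"))
board.add(Task("api", "Implement the API", depends_on={"schema"}))
board.add(Task("docs", "Write API docs", depends_on={"api"}))
```

Unlike a checklist that is only ticked off, this structure treats editing and deleting as first-class operations, which matches the shift from keeping one model on track to coordinating several agents.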

Designing a Search Interface

A particularly important set of tools for Claude are the search tools that can be used to build its own context.

When Claude Code first came out, we used a RAG vector database to find context for Claude. While RAG was powerful and fast, it required indexing and setup and could be fragile across a host of different environments. More importantly, Claude was given this context instead of finding the context itself.

But if Claude could search on the web, why not search your codebase? By giving Claude a Grep tool, we could let it search for files and build context itself.
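A search tool of this kind needs no index or setup, which is a large part of its appeal. The function below is a minimal sketch of the idea using only the Python standard library. Its name, parameters and `path:line:text` output format are assumptions, not Claude Code's actual Grep tool.

```python
import re
import tempfile
from pathlib import Path


def grep(pattern, root, glob="**/*", max_matches=50):
    """Return 'path:line:text' for lines under `root` matching the regex."""
    regex = re.compile(pattern)
    root = Path(root)
    results = []
    for path in sorted(root.glob(glob)):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                rel = path.relative_to(root).as_posix()
                results.append(f"{rel}:{lineno}:{line.strip()}")
                if len(results) >= max_matches:
                    return results  # cap output so context stays small
    return results


# Tiny throwaway project to search.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "src").mkdir()
    (Path(tmp) / "src" / "auth.py").write_text(
        "def login(user):\n    return check_token(user)\n", encoding="utf-8"
    )
    (Path(tmp) / "README.md").write_text("Run the server with make serve.\n", encoding="utf-8")
    hits = grep(r"check_token", tmp)
```

The model decides what to search for and can follow up on each hit, so the context it builds reflects its own line of investigation.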

This is a pattern we’ve seen: as Claude gets smarter, it becomes increasingly good at building its own context if it’s given the right tools.

When we introduced Agent Skills we formalized the idea of progressive disclosure, which allows agents to incrementally discover relevant context through exploration.

Claude could read skill files and those files could then reference other files that the model could read recursively. In fact, a common use of skills is to add more search capabilities to Claude like giving it instructions on how to use an API or query a database.
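The sketch below illustrates the reading pattern just described. The file names and contents are invented, the files live in a dictionary instead of on disk, and links are assumed to be markdown links to `.md` paths relative to the skill root. `read_file` returns a file's content plus the references it mentions, and the caller (standing in for the model) decides which of those to open next.

```python
import re

# Markdown links that point at other .md files, e.g. [schema](reference/schema.md)
LINK_RE = re.compile(r"\[[^\]]*\]\(([^)\s]+\.md)\)")

# Invented skill files for illustration only.
SKILL_FILES = {
    "SKILL.md": (
        "# Billing skill\n"
        "For table layouts see [schema](reference/schema.md).\n"
        "For HTTP usage see [api](reference/api.md)."
    ),
    "reference/schema.md": (
        "Tables: invoices, customers. Query tips: [queries](reference/queries.md)."
    ),
    "reference/api.md": "POST /invoices creates an invoice.",
    "reference/queries.md": "Join invoices to customers on customer_id.",
}

loaded = []  # every file that actually entered the context


def read_file(path):
    """Load one file and report which other files it points to."""
    loaded.append(path)
    content = SKILL_FILES[path]
    return {"content": content, "references": LINK_RE.findall(content)}


# A question about joins only needs the schema branch:
first = read_file("SKILL.md")
second = read_file("reference/schema.md")
third = read_file("reference/queries.md")
```

Here `reference/api.md` is never loaded: the entry file advertises it, but nothing in the task required following that link.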

Over the course of a year Claude went from not really being able to build its own context, to being able to do nested search across several layers of files to find the exact context it needed.

Progressive disclosure is now a common technique we use to add new functionality without adding a tool.

Progressive Disclosure - The Claude Code Guide Agent

Claude Code currently has ~20 tools, and we are constantly asking ourselves if we need all of them. The bar to add a new tool is high, because this gives the model one more option to think about.

For example, we noticed that Claude did not know enough about how to use Claude Code. If you asked it how to add an MCP or what a slash command did, it would not be able to reply.

We could have put all of this information in the system prompt, but given that users rarely asked about this, it would have added context rot and interfered with Claude Code’s main job: writing code.

Instead, we tried a form of progressive disclosure. We gave Claude a link to its docs which it could then load to search for more information. This worked but we found that Claude would load a lot of results into context to find the right answer when really all you needed was the answer.

So we built the Claude Code Guide subagent, which Claude is prompted to call when you ask about itself. The subagent has extensive instructions on how to search docs well and what to return.
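The benefit of this arrangement is that the documentation pages are read inside the subagent's context and only a short answer returns to the main conversation. The sketch below imitates that boundary with a plain function and keyword overlap in place of a model. The `DOCS` text is placeholder content, not real Claude Code documentation, and `guide_subagent` is a hypothetical name.

```python
import re

# Placeholder pages; NOT real Claude Code documentation.
DOCS = {
    "mcp.md": (
        "Placeholder: MCP servers extend the agent with external tools.\n\n"
        "Placeholder: to add an MCP server, register it in the project settings."
    ),
    "slash-commands.md": (
        "Placeholder: slash commands are shortcuts typed at the prompt.\n\n"
        "Placeholder: custom slash commands live in a commands folder."
    ),
}


def tokenize(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def guide_subagent(question, docs=DOCS, max_chars=120):
    """Scan every page privately and hand back one short, attributed answer."""
    terms = tokenize(question)
    best_name, best_text, best_score = None, "", 0
    for name, page in docs.items():  # full pages stay inside this function
        for paragraph in page.split("\n\n"):
            score = len(terms & tokenize(paragraph))
            if score > best_score:
                best_name, best_text, best_score = name, paragraph, score
    if best_name is None:
        return "No relevant documentation found."
    return f"{best_text[:max_chars]} (source: {best_name})"


answer = guide_subagent("How do I add an MCP server?")
```

Only `answer` would be appended to the main agent's context; the pages that were scanned to produce it never are.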

While this isn’t perfect (Claude can still get confused when you ask it how to set itself up), it is much better than it used to be! We were able to add things to Claude’s action space without adding a tool.

An Art, not a Science

If you were hoping for a set of rigid rules on how to build your tools, unfortunately that is not this guide. Designing the tools for your models is as much an art as it is a science. It depends heavily on the model you’re using, the goal of the agent and the environment it’s operating in.

Experiment often, read your outputs, try new things. See like an agent.